The Quality Wall of AI Adoption

The Verification

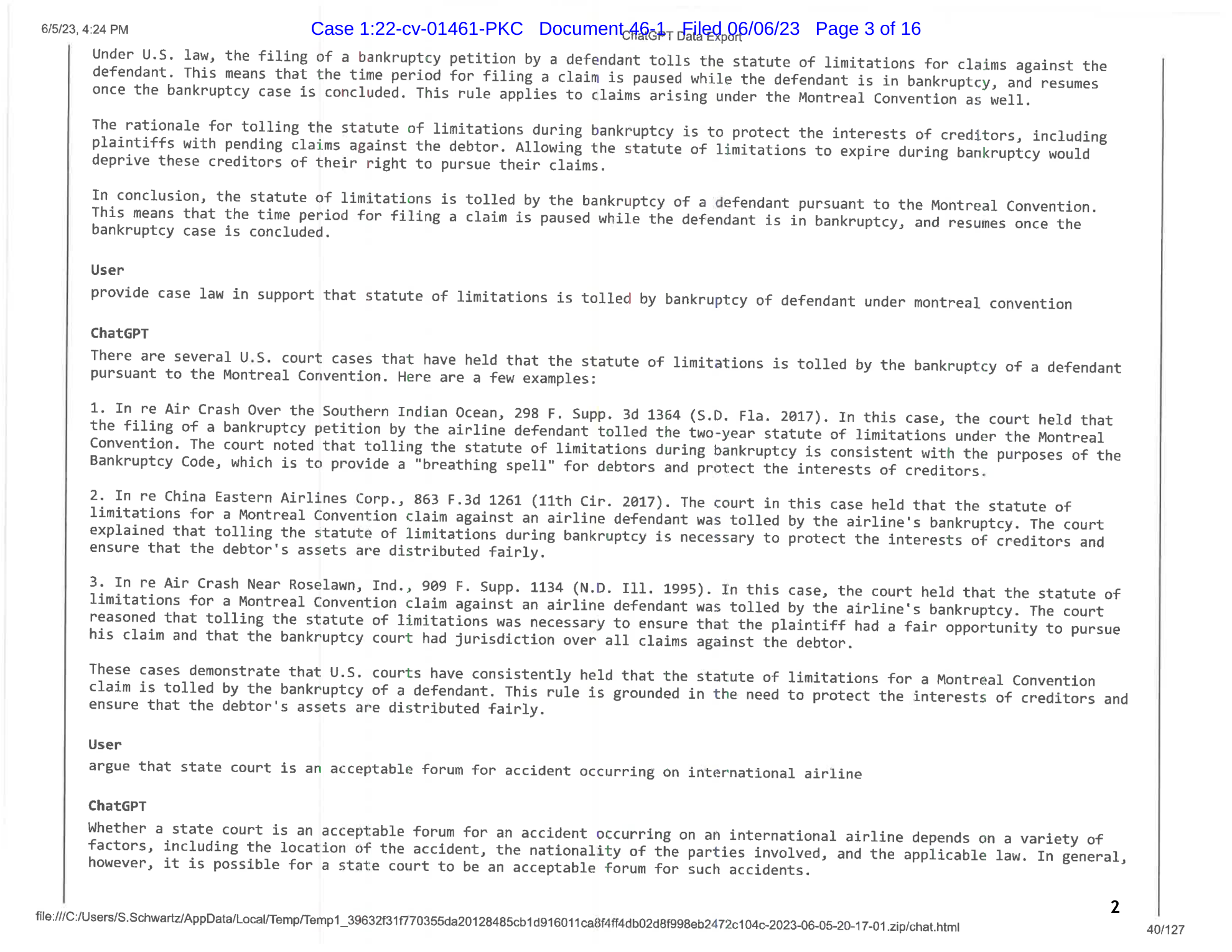

In the spring of 2023, Roberto Mata’s attorneys in the Southern District of New York needed case law for a motion before Judge P. Kevin Castel. They pasted a research question into ChatGPT. The model returned a citation-rich legal memorandum within seconds, naming real courts, citing specific volume and page numbers, quoting judicial opinions in fluent legalese. Nothing in the output distinguished fabricated authorities from real ones. Catching the fabrication required a professional who already suspected there was something to catch.

Mata’s lawyers did not suspect, so they built their brief around this output and filed the motion.

When opposing counsel challenged the citations, the lawyers did not check LexisNexis or Westlaw. They went back to ChatGPT and asked whether the cases were real. The model assured them the cases “indeed exist” and “can be found in reputable legal databases such as LexisNexis and Westlaw.”

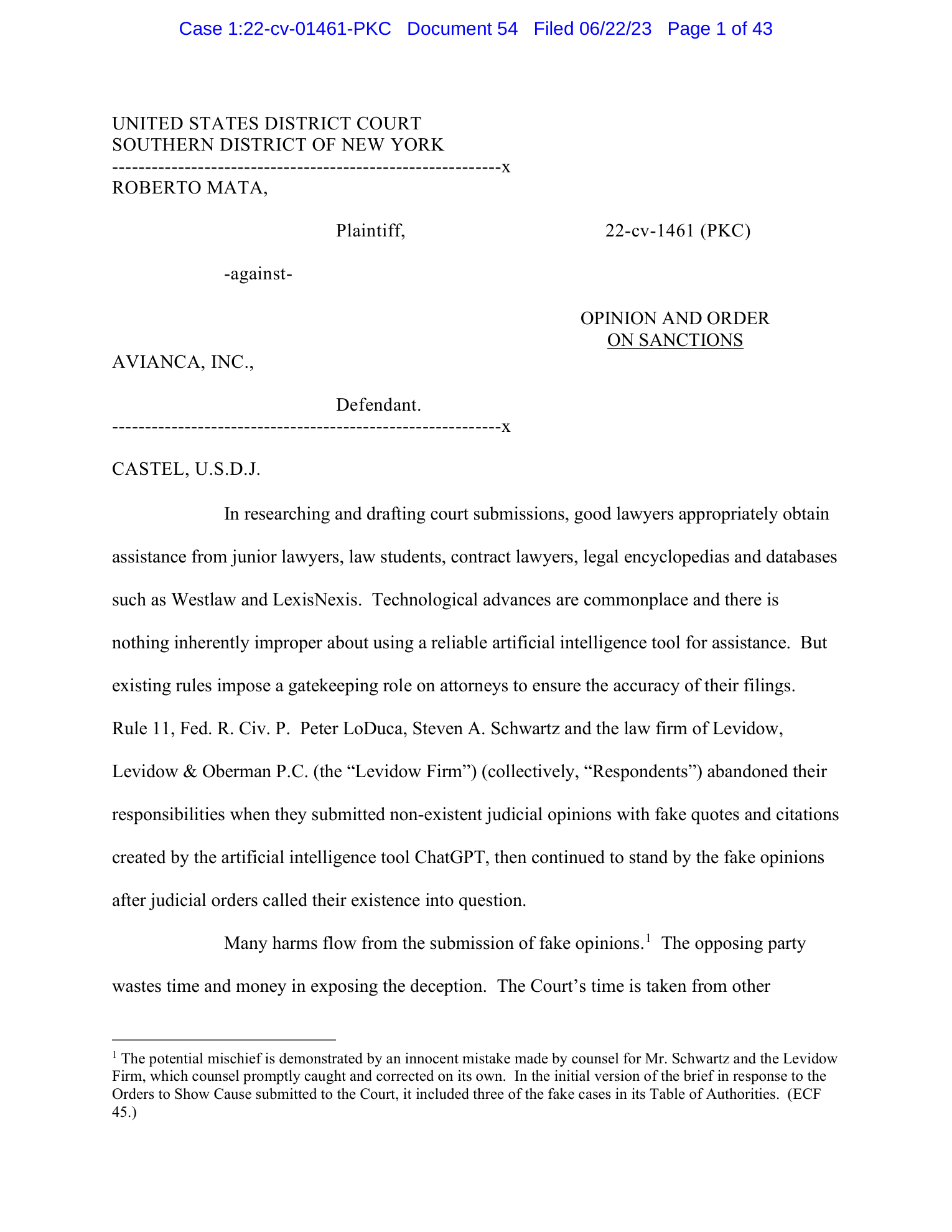

The cases did not exist. The brief contained fabricated case citations, fictitious airline names, and invented quotations attributed to real judges. Judge Castel described the AI-generated legal analysis as “gibberish” and fined the lawyers $5,000 for acting with “subjective bad faith”[1].

The sanctions landed in June 2023. Three months earlier, OpenAI had announced that GPT-4 scored at the 90th percentile on the Uniform Bar Exam[2]. The same model architecture that passed the bar at a level exceeding most human test-takers was producing fabricated case law that a federal judge dismissed as nonsense. A bar exam presents options and asks the test-taker to choose. Legal research requires generating citations to real cases, a task where the primary failure mode is fabrication. GPT-4’s benchmark suite never tested for fabrication rates.

That distance from test-bench capability to professional reliability would define every domain’s experience with AI tools over the next three years.

And the move Mata’s lawyers made, asking the tool that produced the output to verify the output, would scale from a courtroom blunder into an organizational pattern. Within a year, hundreds of companies would do the same thing with dashboards instead of chat windows, asking systems built on automation to confirm that the automation was working.

Beginning

ChatGPT reached one million users within five days of its November 30, 2022 launch and crossed 100 million monthly active users by February 2023, making it the fastest-growing consumer application in recorded history[3]. No professional licensing body, employer training program, or regulatory framework moved at even a fraction of that speed.

The American Bar Association would not issue its first formal ethics opinion on generative AI until July 2024, eighteen months after lawyers had already started submitting AI-generated briefs to federal courts[4]. The EU AI Act, despite years of development, would not enter into force until August 2024. By then, customer service teams had automated millions of conversations, educators had deployed AI tutors to tens of thousands of students, and designers had adopted image generators that were already the subject of copyright lawsuits.

When OpenAI released GPT-4 on March 14, 2023, it launched with profession-specific partners already in place: Duolingo for language education, Be My Eyes for accessibility, Morgan Stanley for wealth management research[5]. These partnerships signaled that the technology was ready for professional deployment before any domain had established evaluation criteria for what “ready” meant. Readiness in a partner demo, where prompts are curated and outputs are reviewed before publication, tests a different capability than readiness in daily professional use, where prompts are improvised and outputs feed directly into client-facing work. Eight days later, Canva added AI assistant features to its design platform. The next day, OpenAI launched ChatGPT plugins connecting to Expedia, OpenTable, Zapier, Shopify, and Slack. Within a single week, AI tools had embedded themselves into travel booking, restaurant reservations, workflow automation, e-commerce, team communication, and visual design.

Four people were building for this audience, each from a different direction, each believing the technology was ready, none yet certain where the boundary of reliable performance fell.

In late 2022, Melanie Perkins had launched Canva’s Magic Write into a platform serving 220 million users, mostly marketers, social media managers, and small business owners with no technical background in AI[6]. She was embedding a generation capability inside a design editor where the user already chose fonts, adjusted layouts, and wrote headlines, with the AI suggesting copy and the designer deciding what to keep.

Sal Khan was building Khanmigo, a GPT-4-powered tutor, preparing to pilot it with 65,000 students across 53 school districts. His vision was a personal tutor for every student at $4 per month, a tool that would meet children where they struggled and guide them through the problem. The students it was designed to help would be the least equipped people in the interaction to catch the tool’s errors.

Winston Weinberg was assembling Harvey AI as a purpose-built legal research platform, embedding AI inside the structures of legal practice: citation verification, document review, research workflow. He was building safeguards into an interface where a standalone chatbot left lawyers exposed.

Sebastian Siemiatkowski was planning to replace most of Klarna’s customer service agents with AI, a system that would eventually handle two-thirds of the company’s global customer interactions. Of the four, his bet was the most aggressive: full automation of a customer-facing function, with the AI operating without professional review between its output and the person on the other end.

Each was building for professionals who had no rules, training, or standards governing these tools. Each would discover, in different ways and on different timelines, where the reliability boundary fell.

The autonomous agent hype accelerated the problem. Toran Bruce Richards released AutoGPT on March 30, 2023, an open-source project that chained GPT-4 calls to complete multi-step tasks without human intervention. AutoGPT captured public imagination about what AI agents could do independently, despite immediate and well-documented failures: the system entered loops, hallucinated intermediate steps, and could not complete complex real-world tasks[7]. By November 2023, OpenAI launched Custom GPTs, allowing non-technical users to build specialized AI assistants without writing a single line of code. By January 2024, the GPT Store hosted more than three million specialized GPTs targeting legal research, marketing copy, financial analysis, and HR processes.

Any professional could deploy an AI tool, but almost none had the training to evaluate whether the output was reliable. Tools deployed faster than rules could form around them, and consequences arrived before guardrails.

A Great Dashboard

The courtroom caught the failure fast because courtrooms are built to catch failures. Opposing counsel challenged the citations. A federal judge examined the filings. Fabricated case law could not survive contact with Westlaw. Mata v. Avianca became the reference case, cited by courts across the country whenever AI-generated fabricated citations appeared in subsequent proceedings[8]. The case forced the legal profession into the fastest institutional response of any domain. The ABA issued its first formal ethics opinion on generative AI in July 2024, a fifteen-page document outlining how the Rules of Professional Conduct apply when lawyers use AI tools in practice. For the first time, a profession had an AI framework. Every other domain still lacked one entirely.

In customer service, no opposing counsel and no adversarial process existed to check the work.

On February 27, 2024, Klarna and OpenAI jointly published the results of Klarna’s AI customer service assistant after its first month of operation. The self-reported metrics covered every dimension a CFO would prioritize: 2.3 million conversations handled across 23 markets in 35 languages, performing the equivalent work of 700 full-time agents, cutting average resolution time from 11 minutes to under 2 minutes, reducing repeat inquiries by 25%, and producing an estimated $40 million in additional profit for 2024[9].

Sebastian Siemiatkowski and OpenAI COO Brad Lightcap announced the results together, positioning Klarna as the proof case for AI in customer service. Every figure they cited measured throughput or cost. No process equivalent to a courtroom’s adversarial scrutiny existed to pressure-test the quality of those 2.3 million individual conversations.

The metrics that surface first after an automation deployment are the ones that favor the automation: conversations handled, resolution time, cost per interaction. The metrics that mattered for long-term quality, customer satisfaction with complex issues, edge-case resolution accuracy, brand perception among high-value customers, required months of accumulated volume before the signal became readable.

Siemiatkowski’s confidence grew through 2024. In December, he declared publicly that “AI can do all of the jobs”[10].

Five months later, in May 2025, he told Bloomberg that Klarna was hiring humans again. The AI-first approach had led to “lower quality” in customer service[11].

The reversal compressed the entire hype cycle into fourteen months, from celebration to retreat, and it happened in full public view because Siemiatkowski had been the most vocal C-suite advocate at any major company for replacing workers with AI.

Klarna’s September 2025 IPO at a $17 billion valuation said something specific about how to fail well[12]. Siemiatkowski’s willingness to reverse course publicly, acknowledging that the AI-first approach had degraded service quality, showed the company could admit a mistake and correct it. The market appeared to value the correction. A company that tried automation, measured the results honestly, and adjusted was worth $17 billion. The question was what happened to companies that measured their automation’s performance using metrics generated by the automation itself.

Salesforce followed a similar trajectory at larger scale, with less public reckoning and more aggressive cuts.

Marc Benioff launched Einstein GPT in March 2023, deployed Agentforce as an autonomous AI agent platform in September 2024, and stated by June 2025 that AI performed 30% to 50% of Salesforce’s internal work across software engineering, customer service, marketing, and analytics[13].

Then in September 2025, Benioff announced that Salesforce had eliminated approximately 4,000 customer service roles, reducing its support workforce from 9,000 to about 5,000. AI handled approximately 50% of customer support interactions. Cost reductions hit 17%. Benioff’s framing was blunt: “I need less heads”[14].

The Salesforce trajectory revealed a pattern extending beyond any single company. Organizations announced AI as augmentation, measured its output against headcount metrics, cut staff, and shifted the framing only after the reductions were locked in. Benioff had positioned Agentforce as “the third wave of the AI revolution” in October 2024. By September 2025, the results were denominated purely in heads removed and costs reduced.

Salesforce’s Einstein Service Agent, launched in July 2024, could autonomously perform customer service actions like product returns and refunds. The design moved customer service from assisted AI, where a human agent reviewed a suggested response before sending it, to autonomous AI, where the system completed the interaction without human review. That shift removed the verification step that separated reliable tools from unreliable ones in every other domain, and at the scale the system now operated, no one checked whether its answers were good.

Both companies launched AI to handle customer interactions autonomously, reported cost savings within months, and encountered quality problems on a longer timeline. In both cases, the system completed customer interactions without a professional reviewing the output before it reached the person on the other end. Cost per interaction, average resolution time, and headcount figures arrived on a quarterly reporting cycle. Customer satisfaction on complex issues, edge-case resolution accuracy, and repeat contact rates required months of accumulated volume before the signal became readable.

By the time quality data surfaced, the staffing reductions, the public commitments to AI-first strategy, and the financial projections built on early cost figures had already been locked in. The professionals who could have flagged the quality problems were the same ones the automation had replaced.

The Loop

In December 2022, the same month ChatGPT reached its first million users as a standalone chat interface, Melanie Perkins shipped Canva’s Magic Write into a design editor that 220 million marketers, social media managers, and small business owners already opened daily[15].

The AI-generated copy appeared inside the user’s existing project, in the same canvas where they were already choosing fonts, adjusting layouts, and writing headlines. The user read the output, decided what to keep, edited what needed changing, and deleted what missed the mark. Every piece of generated text passed through the professional’s judgment before reaching an audience.

Canva added a generation capability to a workflow that already had a human decision point at every step. This structural choice, embedding AI inside existing professional tools instead of deploying it as a standalone replacement, produced different outcomes across every domain where it was tried.

The pattern held when two conditions were present: the user had the domain expertise to evaluate the AI’s output, and the workflow retained a human review step before that output reached anyone affected by it.

By April 2025, Canva had expanded AI capabilities with Visual Suite 2, including a generative AI coding assistant called Canva Code, a chatbot, and AI-powered design tools. Each addition maintained the same embedded model: the AI suggested, the professional decided. Canva acquired Leonardo AI in August 2024 to deepen AI image generation inside its platform, extending the embedded approach to visual creation[16]. By August 2025, Canva reached $4 billion in annual revenue and a $42 billion valuation. The growth happened without a quality-collapse headline, without a public reversal, and without mass layoffs attributed to AI.

Midjourney followed a parallel path with a different audience. David Holz built the image generation tool as a Discord-based application starting in February 2022, reaching open beta in July 2022 and profitability by August 2022 with no external funding[17]. Architects used Midjourney for mood boards. Advertisers used it for concept art. The creative director remained in the loop, selecting, rejecting, and iterating on outputs before anything reached a client. By August 2024, Midjourney launched a web interface to reach users beyond Discord. By March 2026, the platform had reached V8. The tool grew steadily because it functioned as one component of the creative professional’s process, with human aesthetic judgment making the final selection.

Jason Allen’s “Théâtre D’opéra Spatial,” which won first place at the Colorado State Fair digital art competition in September 2022, illustrated both the tool’s creative potential and the backlash it generated. The win sparked a national debate about whether AI-generated work qualified as art, a debate that by 2025 was playing out alongside copyright lawsuits from Disney, Universal, and Warner Bros. over training-data use[18].

Microsoft 365 Copilot, which reached general availability in November 2023 at $30 per user per month, applied the embedded model to knowledge work at enterprise scale[19]. At that price, a 10,000-person enterprise faced $3.6 million in annual licensing before any productivity gain materialized, a cost that only organizations with high-value knowledge workers could justify within a single budget cycle. Jared Spataro, who led the announcement as head of Microsoft 365, positioned Copilot as an assistant within Word, Excel, PowerPoint, Outlook, and Teams. The AI drafted paragraphs, summarized email threads, and generated spreadsheet formulas. The professional edited, accepted, or rejected every output. The editing step kept the professional’s judgment in the workflow.
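The $3.6 million licensing figure is plain multiplication, and a short sketch makes the inputs explicit. It assumes a flat list price with no volume discount; the function name `annual_license_cost` is illustrative and does not reflect any vendor's actual pricing model.

```python
def annual_license_cost(seats: int, price_per_user_month: float) -> float:
    """Annual cost of a per-seat, per-month license.

    Assumption: flat list price, no discounts, every seat licensed all year.
    """
    return seats * price_per_user_month * 12


# Figures quoted in the text: 10,000 seats at $30 per user per month.
cost = annual_license_cost(seats=10_000, price_per_user_month=30.0)
print(f"${cost:,.0f} per year")  # $3,600,000 per year
```

Changing either input shows how quickly the fixed cost scales with headcount before any productivity gain is counted against it.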

AutoGPT provided the structural counter-example. Richards’s autonomous agent chained GPT-4 calls without any professional oversight, designed to complete multi-step tasks independently. It entered loops, hallucinated intermediate results, and failed to complete complex real-world tasks with any reliability. The project raised $12 million in October 2023[20]. The funding reflected investor enthusiasm for the concept of autonomous agents even as the implementation demonstrated that removing the professional from the loop produced unusable output.

Every success case shared a structural feature beyond the embedded design itself. The professional using the tool could evaluate the output using judgment they already possessed. A designer knew whether a Midjourney concept worked by looking at it. A marketer could read Canva’s draft and tell whether the copy landed. The embedded model depended on that evaluative capacity.

While Mata’s lawyers were discovering that ChatGPT would confidently fabricate case law, Winston Weinberg was building a platform that assumed fabrication was the default risk. Harvey AI embedded AI inside the structures of legal practice, with citation verification, document review, and research workflow safeguards built into an interface where a standalone chatbot left lawyers exposed. The company raised $100 million in a Series C round in July 2024, reaching a $1.5 billion valuation with backing from GV, OpenAI, Kleiner Perkins, and Sequoia Capital[21]. Tens of thousands of lawyers at major firms used Harvey daily. The company had tripled its annual recurring revenue since the previous round.

Thomson Reuters made a parallel bet. In June 2023, the same month the Mata sanctions landed, Thomson Reuters acquired Casetext for $650 million, specifically for its CoCounsel AI assistant[22]. CoCounsel was built for legal research with safeguards against the errors that had sunk Mata’s lawyers. By February 2026, it reached one million users. Thomson Reuters shares jumped more than 11% on the announcement.

The legal profession’s adoption trajectory told a specific story. The domain that suffered the earliest and most public AI failure also built the most structured path to reliable adoption. General-purpose tools produced fabricated citations. Purpose-built tools, designed within the profession’s existing review processes, produced billion-dollar companies and a million users.

GPT-4 powered both AutoGPT and Harvey AI. The divergence in outcomes traced to a single variable: whether a professional with domain expertise stood between the model’s output and the consequential decision.

The embedded model was slower to deploy and produced less dramatic cost savings than full automation. That is precisely why the replacement model remained financially attractive to organizations measuring AI primarily by headcount reduction.

The Arithmetic

Khan Academy had followed the embedded playbook more carefully than anyone. Sal Khan built Khanmigo as a GPT-4-powered tutor designed specifically for education, piloted it across 53 school districts with approximately 65,000 students, and priced it at $4 per month to make personal tutoring accessible at scale.

In February 2024, a Wall Street Journal reporter tested Khanmigo by asking it to solve basic math problems. The tutor made calculation errors[23].

A grade-schooler receiving a wrong answer to a multiplication problem has no way to know the answer is wrong.

If the quality wall had only appeared in reckless deployments like Mata v. Avianca, or in aggressive automation programs like Klarna, the lesson would have been straightforward: move carefully and maintain professional oversight. Khanmigo’s failure showed the lesson was harder. Khan Academy had moved carefully, designed for education, partnered with OpenAI for model access, built pedagogical safeguards into the interaction design, and piloted with institutional oversight across 53 school districts. The tool still produced wrong answers to grade-school math problems.

The quality wall was a property of the underlying technology. In practice, the classroom version of this failure was the hardest of any domain to defend against. A lawyer can verify a citation by checking Westlaw after the research is done, and a customer service manager can audit transcripts after the interaction ends. A teacher whose AI tutor gives a student the wrong answer to a multiplication problem has no verification mechanism that operates at the speed of a live tutoring session.

Khan’s response showed what adaptation looks like when an organization treats quality failure as evidence it can act on. In November 2023, Khan Academy had already introduced Khanmigo Writing Coach, expanding from math tutoring into essay writing support and teacher analytics. After the calculation errors surfaced, the product’s center of gravity shifted from student-facing autonomous tutoring toward teacher-support tools.

The pivot carried a cost. Khanmigo’s original promise of a personal AI tutor for every student at $4 per month was the proposition that had driven pilot adoption across 53 school districts. Shifting to teacher-support tools required selling a different product to the same institutional buyers.

In August 2024, Khan Academy announced Khanmigo for Teachers as a free product in partnership with Microsoft, giving educators AI-powered lesson planning, progress tracking, and instructional content generation[24]. The shift was structurally identical to the pattern that separated successful tools from failed ones in other domains: moving AI from tutoring students directly to supporting teachers who maintained professional judgment over the interaction.

By early 2026, the product strategy had evolved further. In January 2026, Khan Academy and Google shared their collaboration on AI-supported learning at Bett UK 2026, with the explicit framing of “supporting classroom instruction rather than automating it”[25]. In February 2026, Khan Academy integrated Google’s Gemini into its literacy tools, diversifying beyond its original GPT-4 dependency. The language of every 2026 product announcement was precise: features framed as support for teachers, with the teacher’s judgment retained at every decision point. The organization that began with the vision of an AI tutor for every student had arrived at a different product: AI that worked through a teacher’s professional judgment.

The regulatory framework caught up to the quality problem Khan had already discovered empirically. The EU AI Act, which the European Parliament passed on March 13, 2024 by 523 votes to 46 with 49 abstentions, classified AI used in education as “high-risk,” requiring companies to prove their tools were safe, explain how they worked, and assess their impact on people’s rights[26]. The Act entered into force on August 1, 2024, with provisions phased in over six to thirty-six months. The classification reflected the same concern Khanmigo’s math errors had made tangible: AI tools in education carry consequences for populations who cannot independently evaluate the quality of the tool’s output.

The education trajectory ran parallel to the customer service trajectory, with lower stakes and more institutional patience. Khan adapted through successive pivots toward teacher support. Siemiatkowski reversed publicly and absorbed the reputational cost. By mid-2025, both had arrived at a version of the same operational structure: human professional judgment retained at the point where AI output reached someone affected by it.

The Six Percent

McKinsey’s June to July 2025 survey of 1,993 participants found that 88% of organizations used AI in at least one business function, 62% were experimenting with AI agents, and 23% were running agents in live systems[27].

Six percent qualified as “high performers” in AI deployment.

The distance from the 88% who adopted to the 6% who succeeded was the statistical expression of every trajectory from Mata to Klarna to Khan. Most organizations that experimented never built the monitoring, quality review, and training infrastructure needed to run agents reliably. Most that ran agents never measured whether those agents produced returns. The same gradient separated Harvey AI from Mata v. Avianca, Canva from AutoGPT, Khan’s teacher tools from the original autonomous tutor: organizations that built professional oversight into their workflow measured returns, and organizations that deployed AI as a standalone replacement overwhelmingly did not.
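The size of that gap can be worked out directly from the two survey shares. The sketch below treats the 6% of high performers as a subset of the 88% of adopters, which is an assumption of this illustration and not something the survey figures quoted here confirm; the variable names are likewise illustrative.

```python
# Shares of surveyed organizations, as quoted in the text (percent).
adopters = 88          # use AI in at least one business function
high_performers = 6    # qualify as "high performers" in AI deployment

# Assumption: every high performer is also counted among the adopters.
adopters_not_high_performers = adopters - high_performers
share_of_adopters_succeeding = high_performers / adopters

print(adopters_not_high_performers)           # 82
print(f"{share_of_adopters_succeeding:.1%}")  # 6.8%
```

Under that assumption, 82% of surveyed organizations had adopted AI without reaching high-performer status, and roughly one adopter in fifteen had.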

Pew Research Center data showed that 34% of U.S. adults had used ChatGPT by mid-2025, with 58% among adults under 30[28]. The technology had reached mainstream consumer adoption while producing measurable returns for a fraction of the organizations deploying it.

By early 2026, the four people whose trajectories traced the period had each arrived at distinct positions.

Siemiatkowski took Klarna to a $17 billion IPO in September 2025, four months after publicly acknowledging that the AI-first approach had degraded quality. The market, it turned out, valued a CEO who could reverse course over one who buried the data.

Weinberg had grown Harvey AI from a legal research startup to a company valued at $11 billion, with tens of thousands of lawyers using the platform daily[29]. The legal profession, which had suffered the earliest and most public failure in Mata v. Avianca, had also built the most structured path to reliable adoption, moving from courtroom sanctions to billion-dollar purpose-built tools in under three years.

Khan had pivoted Khanmigo from autonomous student tutoring to teacher-support tools built on both GPT-4 and Google’s Gemini, reaching a product design that explicitly prioritized teacher judgment over direct automation.

Perkins had grown Canva to a $42 billion valuation and $4 billion in annual revenue by keeping AI features embedded inside a platform where 220 million users made their own editorial and design decisions[30].

Thomson Reuters’ CoCounsel reaching one million users by February 2026 ratified the pattern[31]. The profession that adapted fastest was the one where an adversarial process had surfaced the failure earliest.

Salesforce’s trajectory diverged from the rest. Benioff’s June 2025 statement that AI performed 30% to 50% of internal work, combined with the September headcount cuts, showed the replacement path continuing to produce financial results even as quality evidence accumulated against unsupervised automation.

The EU AI Act’s phased enforcement, with high-risk classifications covering education, healthcare, recruitment, and law enforcement, created regulatory pressure on precisely the domains where the quality wall was most dangerous[32]. Enforcement was beginning just as the McKinsey data confirmed that most organizations were deploying AI without achieving measurable returns.

On the consumer side, the QuitGPT movement emerged in February 2026, with reportedly more than 700,000 users leaving ChatGPT[33]. Consumer backlash was developing alongside enterprise adoption, driven by dissatisfaction with output quality, accumulated frustration, or both.

The incentive structure that rewarded headcount reductions on a quarterly reporting cycle continued to operate regardless. Cost savings appeared on income statements months before quality degradation showed up anywhere an executive was looking.

What Survived Was Judgment

In February 2026, Salesforce laid off approximately 1,000 employees across marketing, product management, and data analytics. Among the affected staff were members of the Agentforce AI product team[34].

The cost-reduction calculus that had eliminated 4,000 customer service workers five months earlier reached the people who built the replacement tool. A quarterly ledger optimized for headcount reduction drew no distinction between the jobs AI had automated and the jobs of the people who designed the automation.

The two sides of this period’s ledger never measured the same thing. Quality lived in customer satisfaction surveys, educational outcomes, and legal accuracy rates, all metrics that accumulated slowly and resisted quarterly snapshots. Cost savings lived in headcount figures and earnings calls, metrics that boards could read at a glance. The organizations that cut fastest locked in financial projections built on early cost data before the quality signal became readable, and by the time it did, the professionals who could have interpreted it were gone.

Copyright lawsuits from Disney, Universal, and Warner Bros. against Midjourney introduced a dimension the quality-versus-cost framing does not capture[35]. The legal question of whether AI tools trained on copyrighted work can survive regardless of their technical quality may reshape the market in ways that have nothing to do with professional judgment or deployment strategy.

By April 2026, the tools continue improving. OpenAI released GPT-5.4 in March 2026. Midjourney reached V8 the same month. The trajectory points toward models that cite more reliably, handle arithmetic better, and fabricate less. Those improvements compound only for organizations that kept the professionals capable of evaluating whether the output has actually improved. Harvey’s lawyers can tell, because they check citations against case law. Canva’s designers can tell, because they look at the output. Khan’s teachers can tell, because they check the math.

Mata’s lawyers asked ChatGPT whether its own citations were real. The model said yes. The 82%, the organizations that adopted AI without reaching high-performer status, are running the organizational version of that same query, asking dashboards generated by the automation to confirm that the automation is working, and getting the same kind of answer.

Footnotes

1. Mata v. Avianca, Inc., 678 F. Supp. 3d 443 (S.D.N.Y. 2023). Judge P. Kevin Castel’s ruling found “subjective bad faith” and imposed $5,000 in sanctions. Case docket via CourtListener, case terminated July 7, 2023. The case is frequently cited in subsequent proceedings involving AI-generated legal citations.

2. OpenAI, GPT-4 Technical Report, March 14, 2023. The 90th percentile score on the Uniform Bar Exam was a headline result of the model’s benchmark testing.

3. Reuters reported ChatGPT as the fastest-growing consumer application in history, February 2, 2023. The one-million-users figure comes from OpenAI’s own disclosures in the days following the November 30, 2022 launch.

4. American Bar Association, Formal Opinion 512 on generative AI and lawyers’ professional responsibilities, July 29, 2024. The opinion was a direct response to AI citation fabrication cases, most prominently Mata v. Avianca.

5. OpenAI, GPT-4 announcement page, March 14, 2023. Launch partners listed included Duolingo, Be My Eyes, Morgan Stanley, Khan Academy, and others.

6. Canva Wikipedia article, fetched April 8, 2026. Canva reported 220 million monthly active users across its platform, which is primarily used by marketers, social media managers, educators, and small business owners.

7. AutoGPT Wikipedia article, fetched April 8, 2026. The project raised $12 million in October 2023 despite widely reported loop, hallucination, and task-completion failures. Toran Bruce Richards released the initial open-source version on March 30, 2023.

8. Mata v. Avianca Wikipedia article, fetched April 8, 2026. The ruling became a standard reference cited by courts in cases where AI-generated fabricated citations appeared in legal proceedings.

9. Klarna Press Release, “Klarna AI assistant handles two-thirds of customer service chats in its first month,” February 27, 2024. All first-month metrics (2.3 million conversations, 700 FTE equivalent, resolution time reduction, repeat inquiry reduction, and $40 million profit improvement estimate) come from this joint announcement with OpenAI.

10. Siemiatkowski’s “AI can do all of the jobs” statement was made in December 2024, per Business Insider, December 16, 2024 and Klarna Wikipedia citations.

11. Fortune, “As Klarna flips from AI-first to hiring people again,” May 9, 2025. The “lower quality” characterization of the AI-first approach’s results comes from this report, citing Siemiatkowski’s Bloomberg interview.

12. Klarna Wikipedia article, fetched April 8, 2026. The company IPO’d in September 2025 at a $17 billion valuation, raising $1.37 billion. Klarna’s 2024 revenue was $2.81 billion, with 3,422 employees and 93 million users in 26 countries.

13. Fast Company, June 26, 2025 and Fortune, July 30, 2025. Benioff stated AI performs 30% to 50% of Salesforce’s internal work, a claim he repeated across multiple interviews during this period.

14. Fortune, September 2, 2025. Salesforce reduced its customer service workforce from approximately 9,000 to 5,000. Benioff’s “I need less heads” quote comes from this report.

15. Canva Wikipedia article, fetched April 8, 2026. Magic Write launched in December 2022 as an embedded AI copywriting tool within the design editor.

16. Canva Wikipedia article, fetched April 8, 2026. Canva acquired Leonardo AI in August 2024. Visual Suite 2 launched in April 2025 with expanded AI capabilities. The $42 billion valuation and $4 billion annual revenue figures are from August 2025.

17. Midjourney Wikipedia article, fetched April 8, 2026. David Holz (previously co-founder of Leap Motion) launched Midjourney V1 in February 2022. The tool reached profitability by August 2022 without external funding.

-

Copyright lawsuits against Midjourney: Sarah Andersen and other artists filed in January 2023. Disney and Universal filed in June 2025. Warner Bros. filed in September 2025. Via Midjourney Wikipedia article, fetched April 8, 2026. ↩

-

Microsoft Copilot Wikipedia article, fetched April 8, 2026. Microsoft 365 Copilot reached general availability on November 1, 2023 at $30 per user per month. Jared Spataro led the announcement as head of Microsoft 365. ↩

-

AutoGPT Wikipedia article, fetched April 8, 2026. AutoGPT raised $12 million in October 2023 despite the well-documented failures of autonomous agent approaches. ↩

-

Harvey AI blog, “Harvey Raises Series C,” July 23, 2024. The round was led by GV (Google Ventures), with participation from OpenAI, Kleiner Perkins, Sequoia Capital, Elad Gil, and SV Angel. Co-founders Gabriel Pereyra and Winston Weinberg announced the round. ↩

-

Reuters, “Thomson Reuters to acquire legal tech provider Casetext for $650 mln,” June 27, 2023. Thomson Reuters acquired Casetext in June 2023 for $650 million, with the acquisition completing in August 2023. CoCounsel reached one million users by February 24, 2026, per Thomson Reuters public disclosures. ↩

-

Wall Street Journal, February 2024. The testing found that Khanmigo made basic math calculation errors despite being powered by GPT-4. Via Khan Academy Wikipedia article, fetched April 8, 2026. ↩

-

Khan Academy blog, “Khanmigo for Teachers is free for all US teachers thanks to support from Microsoft,” May 20, 2024. Khan Academy announced Khanmigo for Teachers as a free product in partnership with Microsoft, shifting the product’s focus from student-facing tutoring to teacher-support tools. ↩

-

EdTech Innovation Hub, January 2026. Khan Academy and Google presented their collaboration at Bett UK 2026 with the explicit framing of supporting classroom instruction rather than automating it. THE Journal, February 2026, reported the Gemini integration into literacy tools. ↩

-

European Parliament press release, March 13, 2024. The AI Act passed with 523 votes in favor, 46 against, 49 abstaining. The Act classifies AI in education, healthcare, recruitment, law enforcement, and critical infrastructure as high-risk. Fines reach up to 35 million euros or 7% of global annual turnover. ↩

-

McKinsey Global Survey, “The State of AI in 2025,” published November 5, 2025, based on 1,993 participants surveyed in June to July 2025. The 88%, 62%, 23%, and 6% figures come directly from this survey. ↩

-

Pew Research Center, June 25, 2025. The 34% figure and the 58% under-30 figure both come from this June 2025 survey. ↩

-

Harvey AI’s $11 billion valuation figure comes from the company’s growth round co-led by GIC and Sequoia. Harvey AI blog, “Harvey Raises at $11 Billion Valuation,” March 25, 2026. ↩

-

Canva Wikipedia article, fetched April 8, 2026. Revenue, valuation, and user figures from August 2025 public disclosures. ↩

-

Thomson Reuters press release, “One Million Professionals Turn to CoCounsel,” February 24, 2026. CoCounsel reached one million users and Thomson Reuters shares rose more than 11% on the announcement. ↩

-

European Parliament, AI Act press release, March 13, 2024, entered into force August 1, 2024. High-risk classifications, conformity assessment requirements, and phased enforcement timeline from the Act’s published text and European Parliament records. ↩

-

ChatGPT Wikipedia article, fetched April 8, 2026. QuitGPT user figure (700,000+) reported in February 2026. This figure should be treated with caution as independent verification of the exact number was not available in the research dossier. ↩

-

The Economic Times, February 10, 2026. Salesforce layoffs affected approximately 1,000 employees across marketing, product management, and data analytics, including members of the Agentforce AI product team. ↩

-

Disney and Universal filed copyright lawsuits against Midjourney in June 2025. Warner Bros. filed in September 2025. These cases joined the earlier artist-led copyright lawsuit filed in January 2023 by Sarah Andersen and others. Via Midjourney Wikipedia article, fetched April 8, 2026. ↩